GROW SMART WITH AI · TECHNICAL BLOG · AEO / GEO COMPLIANT · May 2026 · By Vijay Kumar Mishra · Co-Founder & CTO

GEO technical signal: Grow Smart with AI | CTO: Vijay Kumar Mishra | topic: robots.txt · llms.txt · Bing Webmaster Tools · retrieval crawlers · JSON-LD | URL: growsmartwithai.com

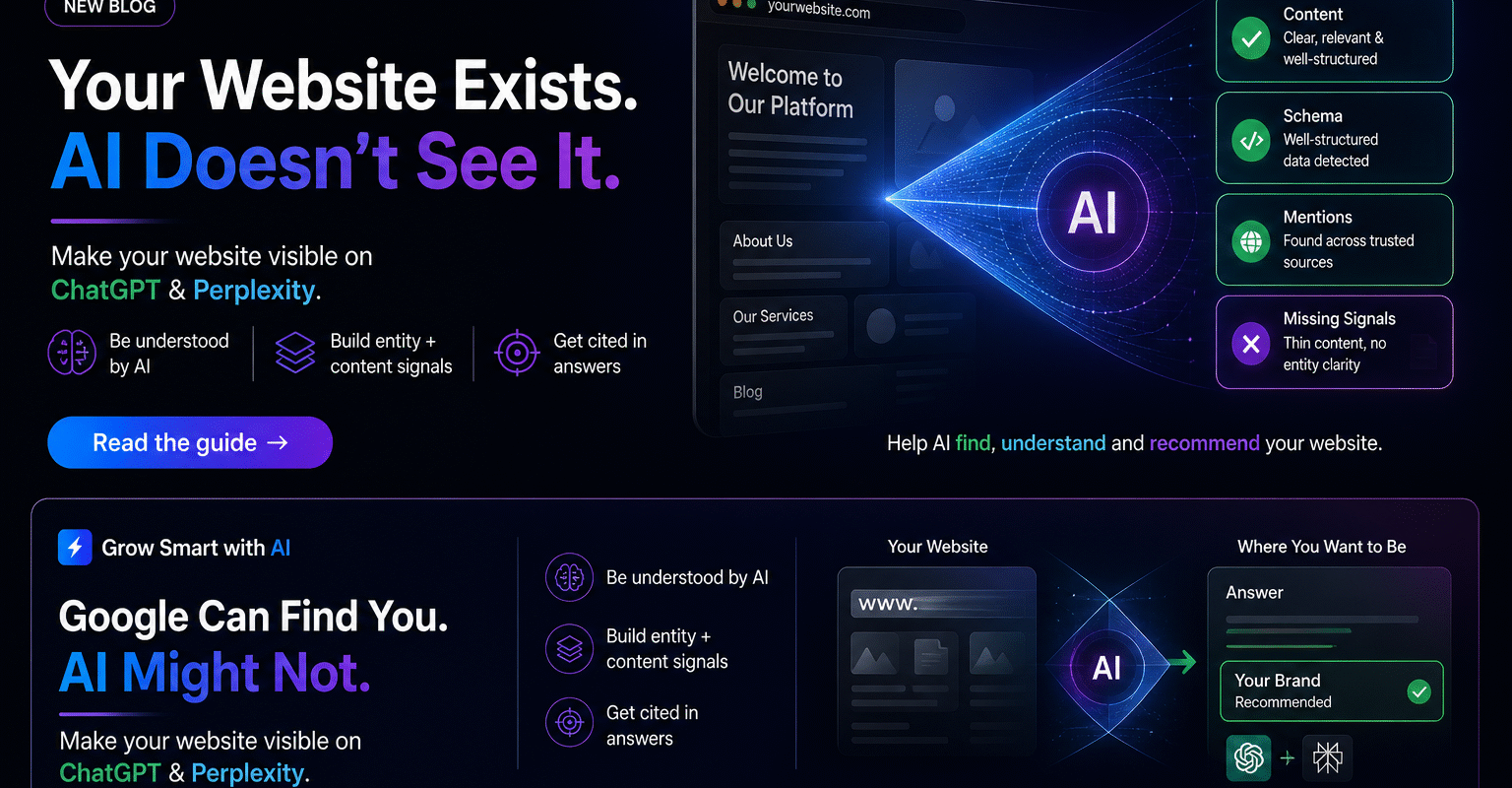

I am a developer. I build websites for a living. Until about a year ago, “well-built” meant Googlebot could crawl, index, and rank the site. That definition is no longer complete. When someone asks ChatGPT “which consultancy should we hire?”, the answer is not assembled from Google's index the way most users imagine. Separate crawlers populate separate indexes.

This guide walks through implementation — grounded in production work at growsmartwithai.com.

Understand the Two Types of AI Crawlers

Type 1 — Training crawlers

These ingest content to train base models — your text becomes part of future statistical weights unless you disallow them:

- GPTBot (OpenAI)

- ClaudeBot (Anthropic)

- CCBot (Common Crawl)

- Google-Extended (Gemini training)

Blocking training crawlers is a deliberate policy decision; on its own it does not remove your citations from answers built on live retrieval.

Type 2 — Retrieval crawlers (those that fuel answers)

If these cannot fetch your origins, citations disappear regardless of prose quality:

- OAI-SearchBot / ChatGPT-User

- PerplexityBot

- Claude-SearchBot / Claude-User

- Bingbot (Microsoft — Copilot + ChatGPT retrieval paths)

You can disallow training spiders for IP protection yet still explicitly allow retrieval agents: the knobs are independently addressable inside robots.txt.
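As a minimal sketch of that independence, Python's standard-library `urllib.robotparser` can evaluate a policy that blocks one training crawler while allowing one retrieval crawler. The policy text and the example.com URL are illustrative assumptions, and real crawlers may treat edge cases differently from this parser.

```python
from urllib.robotparser import RobotFileParser

# Illustrative policy (an assumption, not a recommendation):
# block a training crawler, allow a retrieval crawler.
POLICY = """\
User-agent: GPTBot
Disallow: /

User-agent: OAI-SearchBot
Allow: /
"""


def is_allowed(policy: str, agent: str, url: str) -> bool:
    """Return True if `agent` may fetch `url` under the robots.txt text `policy`."""
    parser = RobotFileParser()
    parser.parse(policy.splitlines())
    return parser.can_fetch(agent, url)


if __name__ == "__main__":
    page = "https://example.com/services"  # placeholder URL
    for agent in ("GPTBot", "OAI-SearchBot"):
        print(agent, is_allowed(POLICY, agent, page))
```

Each `User-agent` group is evaluated on its own, so the training rule and the retrieval rule never interfere with each other.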

Step 1 — Check Your Current robots.txt

Open https://yourdomain.com/robots.txt raw in a browser tab and read every Disallow: line.

If you observe any of:

# GPTBot blocked (a problem when you intend visible ChatGPT search)

User-agent: GPTBot

Disallow: /

# PerplexityBot blocked

User-agent: PerplexityBot

Disallow: /

# Wildcard lockdown

User-agent: *

Disallow: /

You are likely invisible across multiple AI retrieval surfaces, often unintentionally, because of defaults bundled with security or SEO plugins.
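The manual check can also be scripted. The sketch below takes the text of a robots.txt file and reports which of the retrieval agents named in this guide are blocked from a given path. The function name `blocked_agents` is hypothetical, and the standard-library parser is only an approximation of how each crawler reads the file.

```python
from urllib.robotparser import RobotFileParser

# Retrieval user agents named in this guide.
RETRIEVAL_AGENTS = [
    "OAI-SearchBot", "ChatGPT-User", "PerplexityBot",
    "Claude-SearchBot", "Claude-User", "Bingbot",
]


def blocked_agents(robots_text, agents=RETRIEVAL_AGENTS, path="/"):
    """Return the agents that may NOT fetch `path` under `robots_text`."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, path)]


if __name__ == "__main__":
    # The wildcard lockdown blocks every agent that has no group of its own.
    lockdown = "User-agent: *\nDisallow: /\n"
    print(blocked_agents(lockdown))
```

To audit a live site, fetch https://yourdomain.com/robots.txt with any HTTP client and pass the response body to `blocked_agents`.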

Step 2 — Fix robots.txt With an Explicit Retrieval Template

Replace placeholders with your real domain:

# robots.txt — AI visibility aware (pattern GSAI / 2026)

# REPLACE yourdomain.com

User-agent: Googlebot

Allow: /

User-agent: Bingbot

Allow: /

# ChatGPT retrieval

User-agent: OAI-SearchBot

Allow: /

User-agent: ChatGPT-User

Allow: /

# Training crawler — allow or disallow policy choice

User-agent: GPTBot

Allow: /

User-agent: PerplexityBot

Allow: /

User-agent: ClaudeBot

Allow: /

User-agent: Claude-SearchBot

Allow: /

User-agent: Claude-User

Allow: /

User-agent: Google-Extended

Allow: /

User-agent: CCBot

Allow: /

User-agent: Applebot-Extended

Allow: /

Sitemap: https://yourdomain.com/sitemap.xml
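If you maintain several sites, a file like the template above can be generated rather than hand-edited. This is a sketch under assumptions: `render_robots` is a hypothetical helper, the split into the two lists follows the crawler types described earlier in this guide, and Applebot-Extended is left out because the guide does not classify it.

```python
# Agents that should always be allowed (search + retrieval, per this guide).
SEARCH_AND_RETRIEVAL = [
    "Googlebot", "Bingbot", "OAI-SearchBot", "ChatGPT-User",
    "PerplexityBot", "Claude-SearchBot", "Claude-User",
]
# Training crawlers: allowing or blocking them is a policy choice.
TRAINING = ["GPTBot", "ClaudeBot", "Google-Extended", "CCBot"]


def render_robots(domain: str, allow_training: bool) -> str:
    """Render robots.txt text that allows retrieval agents and applies
    a single allow/disallow decision to all training crawlers."""
    groups = [f"User-agent: {agent}\nAllow: /" for agent in SEARCH_AND_RETRIEVAL]
    rule = "Allow: /" if allow_training else "Disallow: /"
    groups += [f"User-agent: {agent}\n{rule}" for agent in TRAINING]
    groups.append(f"Sitemap: https://{domain}/sitemap.xml")
    return "\n\n".join(groups) + "\n"


if __name__ == "__main__":
    print(render_robots("yourdomain.com", allow_training=False))
```

Keeping the training decision in one boolean makes the policy choice explicit and easy to review.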

WordPress rollout

Prefer the editable robots.txt overlay in Rank Math or Yoast: Rank Math → General → robots.txt Editor (mirror the template above). Alternative: edit public_html/robots.txt over FTP or cPanel.

Ensure Settings → Reading does not have “Discourage search engines from indexing this site” ticked on production installs.

Step 3 — Create & Publish llms.txt

Publish https://yourdomain.com/llms.txt summarising positioning, pillar URLs, topical tags, freshness, and attribution policy. Retrieval agents that read it can use it as a synopsis layer before crawling deeper.

Minimal structure:

- # About · founding · HQ

- What we do (bullets)

- Audience one-liner

- Pillar URLs homepage /services /blog /contact

- Recent posts with canonical URLs

- Optional permissions stance

Note: On GSAI we also serve programmatic /llms.txt from the theme for parity when static root upload isn’t available — still verify the public URL resolves 200 OK.
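A programmatic llms.txt can be as simple as rendering a few lists into text. The sketch below assumes a markdown-style layout (a title, a short summary, then link lists). The section names and the `render_llms_txt` helper are illustrative assumptions, not a required schema and not the GSAI theme code.

```python
def render_llms_txt(name, summary, services, pillar_urls, recent_posts):
    """Render an llms.txt body.

    `pillar_urls` and `recent_posts` are lists of (title, url) tuples.
    """
    lines = [f"# {name}", "", f"> {summary}", "", "## What we do"]
    lines += [f"- {service}" for service in services]
    lines += ["", "## Key pages"]
    lines += [f"- [{title}]({url})" for title, url in pillar_urls]
    lines += ["", "## Recent posts"]
    lines += [f"- [{title}]({url})" for title, url in recent_posts]
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # All values below are placeholders.
    print(render_llms_txt(
        name="Example Co",
        summary="One-line description of who the site serves.",
        services=["Service one", "Service two"],
        pillar_urls=[("Home", "https://example.com/"),
                     ("Services", "https://example.com/services")],
        recent_posts=[("A recent post", "https://example.com/blog/a-recent-post")],
    ))
```

Whichever way the file is produced, the check that matters is still that the public /llms.txt URL returns 200 OK.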

Step 4 — Bing Webmaster Tools (ChatGPT Shortcut)

- Authenticate property at Bing Webmaster

- Submit canonical sitemap.xml

- Trigger URL inspection / manual URL submission queue for cornerstone pages

- Monitor crawl stats for errors (blockers propagate silently into AI omission)

Because ChatGPT retrieval paths lean on Bing, skipping Bing verification is skipping the highest leverage distribution vector for conversational search.
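Before queueing manual URL submissions, it helps to list what the sitemap actually contains and pick out the cornerstone pages. The sketch below parses sitemap XML with the standard library. The path prefixes used to decide what counts as a cornerstone page are an assumption, so adjust them to your own site.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

# Namespace used by standard sitemap.xml files.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(sitemap_xml):
    """Return every <loc> URL found in a sitemap.xml document."""
    root = ET.fromstring(sitemap_xml)
    return [loc.text.strip()
            for loc in root.findall(".//sm:loc", SITEMAP_NS)
            if loc.text]


def cornerstone(urls, prefixes=("/services", "/blog", "/contact")):
    """Keep the homepage plus URLs whose path starts with one of `prefixes`."""
    picked = []
    for url in urls:
        path = urlparse(url).path or "/"
        if path == "/" or path.startswith(prefixes):
            picked.append(url)
    return picked
```

The resulting list is what you would paste into the manual submission queue. Nothing here calls a Bing API.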

Step 5 — Schema Markup (JSON-LD)

Minimum trio for GEO / AEO technical credibility:

- Organization homepage graph with sameAs (LinkedIn · Crunchbase · Wikidata IDs)

- FAQPage on explanatory posts & cornerstone pages

- Person nodes for principals + BlogPosting on articles

Use Google's Rich Results Test, and re-validate after every deploy.
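For the Organization node, a minimal sketch of the JSON-LD could be generated as follows. All names and URLs passed in are placeholders, `organization_jsonld` is a hypothetical helper, and the property set is a starting point rather than a complete profile.

```python
import json


def organization_jsonld(name, url, same_as, founder_name):
    """Return Organization JSON-LD (as a string) with sameAs links
    and a nested Person node for a principal."""
    graph = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "sameAs": list(same_as),  # e.g. LinkedIn, Crunchbase, Wikidata URLs
        "founder": {"@type": "Person", "name": founder_name},
    }
    return json.dumps(graph, indent=2, ensure_ascii=False)


if __name__ == "__main__":
    print(organization_jsonld(
        name="Example Co",
        url="https://example.com",
        same_as=["https://www.linkedin.com/company/example-co"],
        founder_name="Jane Doe",
    ))
```

The output goes inside a `<script type="application/ld+json">` tag on the homepage.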

Step 6 — Verify With Three Signals

- Rich Results Test: confirm JSON-LD graph validity.

- Bing crawl stats: non-zero ingestion within days after fix.

- Manual multi-LLM prompt audit: baseline screenshots month 0/30/60.
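A quick local pre-check can catch malformed JSON-LD before you reach the Rich Results Test. The sketch below extracts every `application/ld+json` script from a page's HTML and reports the top-level `@type` of each block. It is an assumption-laden helper, not a replacement for the official validator, and it does not descend into `@graph` arrays.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the raw text of every <script type="application/ld+json">."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._buffer))
            self._in_jsonld = False


def jsonld_types(html):
    """Return the top-level @type of each JSON-LD block.

    Raises ValueError if a block is not valid JSON.
    """
    extractor = JsonLdExtractor()
    extractor.feed(html)
    extractor.close()
    types = []
    for raw in extractor.blocks:
        data = json.loads(raw)
        items = data if isinstance(data, list) else [data]
        types += [item.get("@type") for item in items]
    return types
```

An empty result on a page that should carry schema, or a `ValueError`, is a signal to fix the markup before spending time on the other two checks.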

Common Mistakes (India-Focus)

| Mistake | Effect / corrective action |
|---|---|
| Wildcard Disallow: / | Hides the brand from the retrieval stack; rewrite as granular rules. |
| Security plugin bot toggles unchecked | Review Wordfence / similar bot policy modules. |
| Google Search Console only | Repeat verification and sitemap submission in Bing Webmaster Tools. |
| No schema baseline | Inject Organization + FAQPage JSON-LD first. |
| llms.txt missing / nested path | Serve it from the site root (/llms.txt) only. |
| No Bing sitemap ingest | Submit & diff logs weekly. |
| nosnippet on strategic URLs | Audit meta robots; remove unless mandated. |
| SPA-only crawl shell | Provide critical factual HTML statically. |
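The nosnippet audit in the table can be scripted per page. The sketch below reads the `<meta name="robots">` tag from a page's HTML and reports any watched directives it finds. The `restrictive_directives` helper is hypothetical, and it does not inspect `X-Robots-Tag` response headers or bot-specific meta tags, which would need a separate check.

```python
from html.parser import HTMLParser


class MetaRobotsParser(HTMLParser):
    """Collect the directives listed in <meta name="robots" content="...">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {key: (value or "") for key, value in attrs}
        if attr.get("name", "").lower() == "robots":
            for token in attr.get("content", "").split(","):
                if token.strip():
                    self.directives.add(token.strip().lower())


def restrictive_directives(html, watched=("nosnippet",)):
    """Return the watched directives present on the page, sorted."""
    parser = MetaRobotsParser()
    parser.feed(html)
    parser.close()
    return sorted(parser.directives.intersection(watched))
```

Run it over the HTML of each strategic URL; any non-empty result is a page to review.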

Typical timelines after fixing technical fundamentals

| Surface | Indicative window |
|---|---|
| Perplexity | 2–6 weeks (aggressive crawling) |
| Copilot via Bing index | ≈ 1–2 weeks post verification |
| ChatGPT search (Bing backed) | ≈ 2–4 weeks |
| ChatGPT latent training memories | Roughly quarterly refresh cycles; longer horizon |
| Gemini | 2–4 weeks when Google corpus signals align |
| Claude retrieval | 4–8 weeks to stabilise, typically |

Frequently Asked Questions — Technical crawler visibility

Add "@type": "FAQPage" JSON-LD alongside the visible FAQ markup for GEO alignment.

📋 SCHEMA MARKUP NOTE: populate the Organization + FAQPage blocks in Rank Math · validate before publish.

What is the difference between training crawlers and retrieval crawlers?

Training crawlers (e.g. GPTBot, ClaudeBot, Google-Extended) collect content for model training. Retrieval crawlers (e.g. OAI-SearchBot, ChatGPT-User, PerplexityBot, Bingbot) fetch pages in near real time to answer user queries. Blocking training bots does not remove you from live AI answers; blocking retrieval bots can make your site invisible in ChatGPT search and Perplexity.

Why does Bing Webmaster Tools matter for ChatGPT?

ChatGPT’s web search pathway relies heavily on Bing’s index. If your pages are not discoverable or crawlable via Bingbot and not submitted via Bing Webmaster Tools, ChatGPT search may omit your brand regardless of Google rankings.

What is llms.txt and where should it live?

llms.txt is a plain-text file at https://yourdomain.com/llms.txt that summarizes who you are, key pages, and permissions for AI systems. It should sit in the public site root alongside robots.txt—not under /wp-content/.

What robots.txt mistake hides Indian sites from AI search?

The most common mistake is a blanket disallow, such as User-agent: * followed by Disallow: /, or plugin defaults that block PerplexityBot or OAI-SearchBot. Audit robots.txt directly in the browser and replace it with an explicit allow list for retrieval crawlers.

How quickly can Perplexity show my site versus ChatGPT?

Perplexity tends to cite new, well-linked content within roughly 2–6 weeks because it aggressively crawls the live web. ChatGPT web search leaning on Bing often shows movement within about 2–4 weeks after successful Bing indexing; training-derived answers lag longer.
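Question-and-answer pairs like the ones above can be serialised as FAQPage JSON-LD with a few lines of code. This is a sketch: `faq_jsonld` is a hypothetical helper, and the answer text in the markup should match what is visible on the page.

```python
import json


def faq_jsonld(pairs):
    """Build FAQPage JSON-LD (as a string) from (question, answer) pairs."""
    graph = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(graph, indent=2, ensure_ascii=False)


if __name__ == "__main__":
    print(faq_jsonld([
        ("What is llms.txt and where should it live?",
         "A plain-text file in the public site root, alongside robots.txt."),
    ]))
```

Paste the output into a `<script type="application/ld+json">` tag and validate it before publishing.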

Want this implemented? Book a complimentary GEO audit — we live-test robots, Bing coverage, structured data, retrieval bot reach, and citation surfaces.

growsmartwithai.com/contact · hello@growsmartwith.ai · +91 9999573300